Short introduction to Optimizing I/O

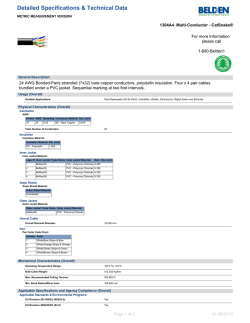

Supercomputing, n. A special branch of scientific computing that turns a computation-bound problem into an I/O-bound problem.

Building blocks of HPC file systems

● Modern supercomputer hardware is typically built on two fundamental pillars:
  1. The use of widely available commodity (inexpensive) hardware.
     ● Intel CPUs, AMD CPUs, DDR3, DDR4, …
  2. Using parallelism to achieve very high performance.
● The file systems connected to computers are built in the same way:
  1. Gather large numbers of widely available, inexpensive storage devices.
     ● Can be HDDs, SSDs
  2. Then connect them together in parallel to create a high bandwidth, high capacity storage device.

Challenges in I/O

● From an application point of view:
  ● The tasks of the application have to be able to make use of the bandwidth the I/O system offers
  ● The number of files created is also an issue
    ● If your application uses more than 10000 tasks and creates 3 files per task, you will have over 30000 output files to deal with
    ● Post processing turns into a nightmare
    ● Also a performance problem
● But the ‘workflow’ is getting more and more important
  ● How is the created data to be used after the run
  ● Where is the data stored
    ● Moving XXX Tbytes of data from /scratch to a permanent place is at best time consuming and at worst impossible
  ● Which tools are used
  ● …

I/O Hierarchy

● Application
● High-Level I/O Lib: The high-level I/O interfaces map your data into the I/O subsystem. They should also be portable, both in the interface and when accessing the data between different hardware systems. HDF5 and netCDF are examples.
● I/O Interfaces: These are the interfaces which “only” move bytes around. This includes the POSIX interface but also higher-performance ones like MPI-IO.
● I/O Transfer: The I/O data has to be moved from the compute nodes to the filesystem.
  Some possibilities are LNET routers for Lustre or Cray DVS nodes.
● Parallel Filesystem: The filesystem used on the hardware, like GPFS, Lustre, PanFS, …
● I/O Hardware: The hardware for a parallel filesystem consists not only of the hard drives but also of servers and, in most cases, a dedicated network.

How can parallel I/O be done

I/O strategies: Spokesperson

● One process performs I/O
  ● Data aggregation or duplication
  ● Limited by the single I/O process
● Easy to program
● Pattern does not scale
  ● Time increases linearly with amount of data
  ● Time increases with number of processes
● Care has to be taken when doing the all-to-one kind of communication at scale
● Can be used for a dedicated I/O server

[Figure: Lustre clients and bottlenecks]

I/O strategies: Multiple Writers – Multiple Files

● All processes perform I/O to individual files
  ● Limited by file system
● Easy to program
● Pattern does not scale at large process counts
  ● Number of files creates a bottleneck with metadata operations
  ● Number of simultaneous disk accesses creates contention for file system resources

I/O strategies: Multiple Writers – Single File

● Each process performs I/O to a single file which is shared.
● Performance
  ● Data layout within the shared file is very important.
  ● At large process counts contention can build for file system resources.
● Not all programming languages support it
  ● C/C++ can work with fseek
  ● No real Fortran standard

I/O strategies: Collective I/O to single or multiple files

● Aggregation to a processor in a group which processes the data.
  ● Serializes I/O in the group.
● I/O process may access independent files.
  ● Limits the number of files accessed.
● Group of processes perform parallel I/O to a shared file.
  ● Increases the number of shares to increase file system usage.
  ● Decreases the number of processes which access a shared file to decrease file system contention.
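For the "Multiple Writers – Single File" strategy, the point about data layout can be illustrated with a small sketch. It is not MPI code: a single Python process loops over hypothetical ranks and uses `os.pwrite` (a POSIX positioned write, standing in for the `fseek` approach mentioned above) so that each writer places its block at its own offset. The names `BLOCK_SIZE`, `block_offset` and `write_shared` are made up for this illustration, and the contiguous one-block-per-rank layout is an assumption.

```python
import os
import tempfile

BLOCK_SIZE = 8  # bytes per writer; tiny on purpose, an assumption for the demo


def block_offset(rank: int, block_size: int = BLOCK_SIZE) -> int:
    """Byte offset of a writer's block in the shared file (contiguous layout)."""
    return rank * block_size


def write_shared(path: str, n_writers: int) -> None:
    """Let every hypothetical writer place its block at its own offset."""
    fd = os.open(path, os.O_CREAT | os.O_WRONLY, 0o600)
    try:
        # Reverse order on purpose: the file content must not depend on
        # which writer happens to run first.
        for rank in reversed(range(n_writers)):
            payload = f"rank{rank:04d}".encode()  # exactly BLOCK_SIZE bytes
            os.pwrite(fd, payload, block_offset(rank))
    finally:
        os.close(fd)


def demo(n_writers: int = 4) -> bytes:
    """Write a shared file in a temporary directory and return its content."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "shared.dat")
        write_shared(path, n_writers)
        with open(path, "rb") as f:
            return f.read()


if __name__ == "__main__":
    print(demo())
```

Because every block has a fixed, non-overlapping offset, no writer needs to know what the others have written. In a real parallel code the same offset arithmetic would typically feed an MPI-IO file view or an explicit-offset write instead.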
Special case: Standard output and error

● On most systems the output streams stdout and stderr from the MPI processes are routed back to the stdout and stderr of mpirun
  ● You cannot expect this to happen everywhere
● On a Cray XC all STDIN, STDOUT, and STDERR I/O streams serialize through aprun
● Disable debugging messages when running in production mode.
  ● “Hello, I’m task 32,000!”
  ● “Task 64,000, made it through loop 24.”

Serial I/O Optimization

We really prefer you to write parallel I/O, but if you have to use serial I/O …

Problem

● Application produces massive serial I/O
● A generic solution for serial I/O is buffering.
  ● Default Linux buffering offers no control.
● Other solutions:
  ● Moving part of the I/O to /tmp, which resides in memory or is local
    ● i.e. changing the source code
    ● With CCE, options for assign are available
  ● Changing the I/O pattern
    ● Rewriting the algorithm
● No source code available, only object files

Possible solutions by:

● Buffering of the Intel Compiler
● IOBUF

IOBUF

● Officially supported by Cray
● Only linked to the application, no source code changes necessary
● IOBUF_PARAMS runtime environment variable controls buffering
● Allows profiling of the I/O on a file level (verbose option)
● Default settings can be changed by several options:
  ● Number of buffers used (count)
  ● Verbosity
  ● Size of buffers
  ● Direct I/O
  ● Synchronous I/O
  ● Bypass size
  ● Individual options for each file or group of files specified

IOBUF Sample: Larger IOBUF settings

    IOBUF parameters: file="fort.11.pe267":size=10485760:count=4:vbuffer_count=409:prefetch=1:verbose:pe_label:line_buffer
    PE 0: File "fort.11.pe267"
                   Calls   Seconds    Megabytes     Megabytes/sec  Avg Size
    Read             740   0.290488   1086.503296   3740.275199    1468248
    Write            782   0.293579   1148.194384   3911.019025    1468279
    Total I/O       1522   0.584067   2234.697680   3826.099036    1468264
    Open               1   0.127007
    Close              1   0.023266
    Buffer Read      158   0.007295      0.000000      0.000000          0
    Buffer Write       1   0.017348      4.398216    253.527960    4398216
    I/O Wait           1   0.017461      4.398216    251.887092
    Buffers used       1 (10 MB)
    Partial reads    158

● Wallclock time close to CPU time.
● Total size of file 4.4 MB

Buffering of the Intel Compiler

● Compiler flag: -assume <options>
  ● [no]buffered_io
    ● Equivalent to OPEN statement BUFFERED='YES'
    ● or environment variable FORT_BUFFERED=TRUE
  ● [no]buffered_stdout
● More control with the OPEN statement
  ● BLOCKSIZE:
    ● size of the disk block I/O buffer
    ● default=8192 (or 1024 if -fpscomp general or all is set)
  ● BUFFERCOUNT:
    ● number of buffers used
    ● default=1
  ● Actual memory used for buffer = BLOCKSIZE × BUFFERCOUNT
● BUFFERED='YES' takes precedence over -assume buffered_io, which takes precedence over FORT_BUFFERED=TRUE
● Source code has to be changed for fine tuning.

Lustre

[Figure: Lustre architecture. Many Lustre clients connect through the High Performance Computing Interconnect to a Metadata Server (MDS), which holds name, permissions, attributes and location, and to several Object Storage Servers (OSS), each with an Object Storage Target (OST). One client issues open(unit=12,file="out.dat").]

Basic file striping on a parallel filesystem

● Single logical user file
● OS/file-system automatically divides the file into stripes
● Stripes are then read/written to/from their assigned server

File decomposition – 2 Megabyte stripes

[Figure: a Lustre client splits a file into 2 MB stripes labelled 3-0, 5-0, 7-0, 11-0, 3-1, 5-1, 7-1, 11-1, which are distributed in turn over OST 3, OST 5, OST 7 and OST 11.]

Tuning the filesystem: Controlling Lustre striping

● lfs is the Lustre utility for setting the stripe properties of new files,
  or displaying the striping patterns of existing ones
● The most used options are
  ● setstripe – Set striping properties of a directory or new file
  ● getstripe – Return information on current striping settings
  ● osts – List the number of OSTs associated with this file system
  ● df – Show disk usage of this file system
● For help execute lfs without any arguments

    $ lfs
    lfs > help
    Available commands are:
        setstripe
        find
        getstripe
        check
        ...

Sample Lustre commands: lfs df

    stefan66@beskow-login2:~> lfs df -h
    UUID                      bytes    Used  Available  Use%  Mounted on
    scania-MDT0000_UUID      899.8G   17.9G     822.0G    2%  /cfs/scania[MDT:0]
    scania-OST0000_UUID       28.5T    4.2T      24.0T   15%  /cfs/scania[OST:0]
    scania-OST0001_UUID       28.5T    4.5T      23.7T   16%  /cfs/scania[OST:1]
    scania-OST0002_UUID       28.5T    3.7T      24.6T   13%  /cfs/scania[OST:2]
    scania-OST0003_UUID       28.5T    4.0T      24.2T   14%  /cfs/scania[OST:3]
    filesystem summary:      114.0T   16.3T      96.5T   14%  /cfs/scania

    UUID                      bytes    Used  Available  Use%  Mounted on
    rydqvist-MDT0000_UUID    139.7G    6.9G     123.2G    5%  /cfs/rydqvist[MDT:0]
    rydqvist-OST0000_UUID     14.5T    6.7T       7.1T   49%  /cfs/rydqvist[OST:0]
    rydqvist-OST0001_UUID     14.5T    6.7T       7.1T   49%  /cfs/rydqvist[OST:1]
    rydqvist-OST0002_UUID     14.5T    6.7T       7.1T   49%  /cfs/rydqvist[OST:2]
    rydqvist-OST0003_UUID     14.5T    6.7T       7.1T   49%  /cfs/rydqvist[OST:3]
    filesystem summary:       58.2T   27.0T      28.2T   49%  /cfs/rydqvist

    UUID                      bytes    Used  Available  Use%  Mounted on
    klemming-MDT0000_UUID    824.9G   76.5G     693.4G   10%  /cfs/stub1[MDT:0]
    klemming-OST0000_UUID     21.3T   20.1T       1.1T   95%  /cfs/stub1[OST:0]
    klemming-OST0001_UUID     21.3T   20.6T     547.9G   97%  /cfs/stub1[OST:1]
    klemming-OST0002_UUID     21.3T   20.7T     369.7G   98%  /cfs/stub1[OST:2]
    …
    klemming-OST001a_UUID     21.3T   20.1T    1011.6G   95%  /cfs/stub1[OST:26]
    klemming-OST001b_UUID     21.3T   20.3T     854.9G   96%  /cfs/stub1[OST:27]
    klemming-OST001c_UUID     21.3T   20.4T     692.0G   97%  /cfs/stub1[OST:28]
    filesystem summary:      618.4T  588.0T      24.2T   96%  /cfs/stub1
    stefan66@beskow-login2:~>

lfs setstripe

● Sets the stripe for a file or a directory
● lfs setstripe “STRIPESETTING” <file|dir> where
  “STRIPESETTING” can be:
  ● --stripe-count: Number of OSTs to stripe over (0 default, -1 all)
  ● --stripe-size: Number of bytes on each OST (0 filesystem default)
  ● --stripe-index: OST index of first stripe (-1 filesystem default)
  (there are more arguments)
● Comments
  ● Can use lfs to create an empty file with the stripes you want (like the touch command)
  ● Can apply striping settings to a directory; any newly created “child” will inherit the parent’s stripe settings on creation.
  ● The striping of a file is set when the file is created. It is not possible to change it afterwards.
  ● The start index is the only OST you can specify; from the second OST onwards you have no control over which ones are used.

Sample Lustre commands: striping

    crystal:ior% mkdir tigger
    crystal:ior% lfs setstripe -s 2m -c 4 tigger
    crystal:ior% lfs getstripe tigger
    tigger
    stripe_count: 4 stripe_size: 2097152 stripe_offset: -1
    crystal% cd tigger
    crystal:tigger% ~/tools/mkfile_linux/mkfile 2g 2g
    crystal:tigger% ls -lh 2g
    -rw------T 1 harveyr criemp 2.0G Sep 11 07:50 2g
    crystal:tigger% lfs getstripe 2g
    2g
    lmm_stripe_count:   4
    lmm_stripe_size:    2097152
    lmm_layout_gen:     0
    lmm_stripe_offset:  26
        obdidx     objid      objid    group
            26  33770409  0x2034ba9        0
            10  33709179  0x2025c7b        0
            18  33764129  0x2033321        0
            22  33762112  0x2032b40        0

Select best Lustre striping values

● Selecting the striping values will have a large impact on the I/O performance of your application
● Rule of thumb:
  1. #files > #OSTs → Set stripe_count=1. You will reduce the Lustre contention and OST file locking this way and gain performance.
  2. #files == 1 → Set stripe_count=#OSTs, assuming you have more than 1 I/O client.
  3. #files < #OSTs → Select stripe_count so that you use all OSTs. Example: Write 6 files to 60 OSTs => set stripe_count=10
● Always allow the system to choose OSTs at random!
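The rule of thumb above can be written down as a small helper. This is only a sketch of the three rules as stated: the function names `choose_stripe_count` and `setstripe_command` are hypothetical, the use of integer division when the number of OSTs is not a multiple of the number of files is an assumption, and the command is only built as a string, never executed.

```python
def choose_stripe_count(n_files: int, n_osts: int) -> int:
    """Pick a stripe count following the three rules of thumb."""
    if n_files < 1 or n_osts < 1:
        raise ValueError("n_files and n_osts must both be at least 1")
    if n_files == 1:
        # Rule 2: a single shared file is striped over all OSTs.
        return n_osts
    if n_files > n_osts:
        # Rule 1: more files than OSTs, so one OST per file.
        return 1
    # Rule 3: spread the files so that together they cover the OSTs.
    # Integer division is an assumption for the non-divisible case.
    return max(1, n_osts // n_files)


def setstripe_command(directory: str, n_files: int, n_osts: int) -> str:
    """Build (but do not run) an lfs setstripe call for a directory."""
    count = choose_stripe_count(n_files, n_osts)
    # No --stripe-index is given, so the system keeps choosing the OSTs.
    return f"lfs setstripe --stripe-count {count} {directory}"


if __name__ == "__main__":
    print(setstripe_command("output_dir", n_files=6, n_osts=60))
```

For the example from the rule of thumb (6 files, 60 OSTs) the helper yields a stripe count of 10. Treat the result as a starting point for measurements rather than a guaranteed optimum.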
Case study: Single-writer I/O

● 32 MB per OST (32 MB – 5 GB) and 32 MB transfer size
● Unable to take advantage of file system parallelism
● Access to multiple disks adds overhead which hurts performance

[Figure: plot comparing 1 MB and 32 MB stripe sizes; horizontal axis ticks run from 1 to 160, vertical axis from 0 to 120.]

Case study: Parallel I/O into a single file

● A particular code both reads and writes a 377 GB file, runs on 6000 cores
  ● Total I/O volume (reads and writes) is 850 GB
  ● Utilizes parallel HDF5 I/O library
● Default stripe settings: count=4, size=1M
  ● 1800 s run time (~ 30 minutes)
● New stripe settings: count=-1, size=1M
  ● 625 s run time (~ 10 minutes)

MPI I/O interaction with Lustre

● Environment variables can be used to tune MPI I/O performance
  ● MPICH_MPIIO_CB_ALIGN (default 2) sets collective buffering behavior
  ● MPICH_MPIIO_HINTS can set striping_factor and striping_unit for files created with MPI I/O
  ● Use MPICH_MPIIO_HINTS_DISPLAY to check the settings
● If the writes and/or reads utilize collective calls, collective buffering can be utilized (romio_cb_read/write) to approximately stripe align I/O within Lustre
● HDF5 and netCDF are implemented on top of MPI I/O and thus can also benefit from these settings

HDF5 format dump file from all PEs

● Total file size 6.4 GB. Mesh of 64M bytes 32M elements, with work divided amongst all PEs.
● Original problem was very poor scaling. For example, without collective buffering, 8000 PEs take over 5 minutes to dump.
● Tested on an XT5, 8 stripes, 8 cb_nodes

[Figure: dump time in seconds (logarithmic axis, 1 to 1000) against number of PEs, for runs without CB and with CB=0, CB=1 and CB=2.]

Asynchronous I/O

● Double buffer arrays to allow computation to continue while data is flushed to disk
  1. Asynchronous POSIX calls
     ● Not currently supported by Lustre
  2. Use 3rd party libraries
     ● Typically MPI I/O
  3. Add I/O servers to application
     ● Dedicated processes to perform time consuming operations
     ● More complicated to implement than other solutions
     ● Portable solution (works on any parallel platform)

I/O servers

● Successful strategy deployed in several codes
● Has become more successful as the number of nodes has increased
  ● Extra nodes only cost a few percent of resources
● Requires additional development that can pay off for codes that generate large files
● Typically still only one or a small number of writers performing I/O operations
  ● may not reach full I/O bandwidth

Naive I/O Server pseudo code

Compute Node

    do i=1,time_steps
      compute(i)
      checkpoint(data)
    end do

    subroutine checkpoint(data)
      MPI_Wait(send_req)            ! previous send must finish before the buffer is reused
      buffer = data                 ! copy, so that compute can overwrite data
      MPI_Isend(IO_SERVER, buffer)  ! non-blocking send to the I/O server
    end subroutine

I/O Server

    do i=1,time_steps
      do j=1,compute_nodes
        MPI_Recv(j, buffer)         ! receive the checkpoint of compute node j
        write(buffer)
      end do
    end do

MPI-IO Profiling

Cray MPI-IO Performance Metrics

● Many times MPI-IO calls are “Black Holes” with little performance information available.
● Cray’s MPI-IO library attempts collective buffering and stripe matching to improve bandwidth and performance.
● Users can help performance by favouring larger contiguous reads/writes over smaller scattered ones.
● Starting with v7.0.3, the Cray MPI-IO library provides a way of collecting statistics on the actual read/write operations performed after collective buffering
  ● Enable with: export MPICH_MPIIO_STATS=2
  ● In addition to some information written to stdout, it will also provide some CSV files which can be analysed by a provided tool called cray_mpiio_summary

MPI-IO Performance Stats Example output

● Running wrf on 19200 cores:

    | MPIIO write access patterns for wrfout_d01_2013-07-01_01_00_00
    |   independent writes      = 2
    |   collective writes       = 5932800
    |   system writes           = 99871
    |   stripe sized writes     = 99291
    |   total bytes for writes  = 104397074583 = 99560 MiB = 97 GiB
    |   ave system write size   = 1045319
    |   number of write gaps    = 2
    |   ave write gap size      = 524284
    | See "Optimizing MPI I/O on Cray XE Systems" S-0013-20 for explanations.

● Best performance when the average write size is > 1 MB and there are few gaps.
● Careful selection of MPI types, file views and ordering of data on disk can improve this.

[Figures, wrf run on 19200 cores: number of MPIIO calls over time; number of system writes and reads; number of stripe-size aligned system write calls; number of stripe-size aligned system read calls; number of files open at any given time.]

Rules for good application I/O performance

1. Use parallel I/O
2. Try to hide I/O (use asynchronous I/O)
3. Tune file system parameters
4. Use I/O buffering for all sequential I/O

I/O performance: to keep in mind

● There is no “one size fits all” solution to the I/O problem
  ● Many I/O patterns work well for some range of parameters
● Bottlenecks in performance can occur in different places
  ● application level
  ● filesystem
● Going to extremes with an I/O pattern will typically lead to problems
● I/O is a shared resource: expect timing variation

Summary

● I/O is always a bottleneck when scaling out
  ● You may have to change your I/O implementation when scaling up
● Take-home messages on I/O performance
  ● Performance is limited for single process I/O
  ● Parallel I/O utilizing a file-per-process or a single shared file is limited at large scales
  ● Potential solution is to utilize multiple shared files or a subset of processes which perform I/O
  ● A dedicated I/O server process (or more) might also help
  ● Use MPI I/O and/or high-level libraries (HDF5, netCDF, ..)
  ● Set the Lustre striping parameters!
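The advice to hide I/O relies on the double-buffering idea from the asynchronous I/O slide and the naive I/O server pseudo code. A minimal single-node sketch of that idea follows. It uses a background Python thread in place of a dedicated MPI I/O server process, which is a substitution made only for illustration; the function names and the tiny buffer size are hypothetical.

```python
import os
import queue
import tempfile
import threading


def writer(path: str, full: queue.Queue, free: queue.Queue) -> None:
    """Background I/O thread: write filled buffers, then recycle them."""
    with open(path, "wb") as f:
        while True:
            buf = full.get()
            if buf is None:      # sentinel: the compute loop has finished
                break
            f.write(buf)
            free.put(buf)        # hand the buffer back to the compute loop


def run(path: str, time_steps: int, n: int = 4) -> None:
    """Compute loop that overlaps its work with the writer thread."""
    full: queue.Queue = queue.Queue()
    free: queue.Queue = queue.Queue()
    for _ in range(2):           # two buffers = double buffering
        free.put(bytearray(n))
    t = threading.Thread(target=writer, args=(path, full, free))
    t.start()
    for step in range(time_steps):
        buf = free.get()         # blocks only while both buffers are in flight
        buf[:] = bytes([step % 256]) * n   # stand-in for compute + checkpoint copy
        full.put(buf)            # returns immediately; the write happens elsewhere
    full.put(None)
    t.join()


def demo(time_steps: int = 3) -> bytes:
    """Run the loop in a temporary directory and return the file content."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "checkpoint.dat")
        run(path, time_steps)
        with open(path, "rb") as f:
            return f.read()


if __name__ == "__main__":
    print(demo())
```

The compute loop stalls only when both buffers are still waiting to be written, which mirrors the role of `MPI_Wait` in the pseudo code. With more buffers the loop tolerates longer I/O stalls at the cost of more memory.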

© Copyright 2026